Talk to Your GCP Services with AI: Model Context Protocol (MCP) Servers are Here!

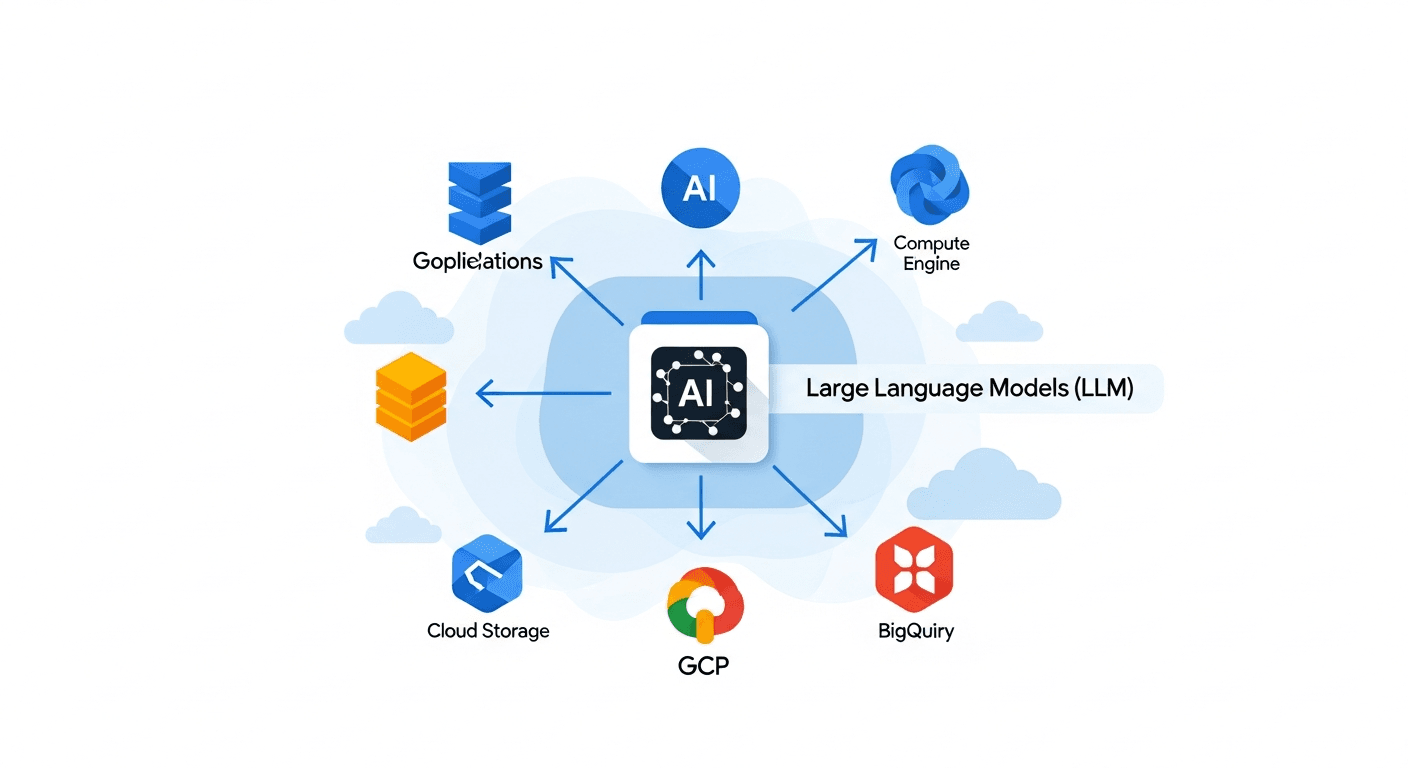

A new way for AI applications and LLMs to interact directly with your favorite Google Cloud services.

Alright, let's chat about something pretty cool that's just gone Generally Available (GA) across a bunch of Google Cloud services. If you're building anything with AI, especially with large language models (LLMs), this is going to make your life a whole lot easier. We're talking about Model Context Protocol (MCP) servers.

Think of it like this: traditionally, if you wanted an AI application to do something in GCP, say, read data from BigQuery or manage a Compute Engine instance, you'd have to write the integration yourself. You'd pick a client library, handle authentication, format requests, and parse responses, for every service and every operation you wanted to support. It works, but it's a lot of boilerplate.

An MCP server changes all that. MCP is an open protocol that gives AI applications and LLMs a standardized way to "talk" to GCP services. The server advertises what it can do as a set of tools, each with a name, a plain-language description, and a schema for its inputs. The model reads those descriptions and decides which tool to call with which arguments, and the MCP server translates that call into an action within the GCP service.
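To make the "tools" idea concrete, here is a minimal Python sketch of the client side of that exchange. MCP messages are JSON-RPC 2.0: a client discovers tools with a `tools/list` request and invokes one with `tools/call`. The tool name `list_buckets`, its `project_id` argument, and the canned server response are hypothetical, not the actual Cloud Storage MCP server's tools, and the required-argument check is a simplification rather than full JSON Schema validation. Transport details (HTTP, authentication, the `initialize` handshake) are deliberately left out.

```python
import json
from typing import Optional

# Hypothetical canned reply to a tools/list request, shaped like an MCP
# ListToolsResult. The tool name and schema are illustrative only; real GCP
# MCP servers publish their own tools.
LIST_TOOLS_RESPONSE = """
{
  "jsonrpc": "2.0",
  "id": 1,
  "result": {
    "tools": [
      {
        "name": "list_buckets",
        "description": "List Cloud Storage buckets in a project.",
        "inputSchema": {
          "type": "object",
          "properties": {"project_id": {"type": "string"}},
          "required": ["project_id"]
        }
      }
    ]
  }
}
"""


def make_request(request_id: int, method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request, the message format MCP is built on."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


def tool_catalog(response_text: str) -> dict:
    """Map each advertised tool name to its input JSON Schema."""
    result = json.loads(response_text)["result"]
    return {tool["name"]: tool["inputSchema"] for tool in result["tools"]}


def missing_arguments(schema: dict, arguments: dict) -> list:
    """List required arguments the model left out (not full JSON Schema validation)."""
    return [name for name in schema.get("required", []) if name not in arguments]


# 1. Discovery: ask the server which tools it offers.
print(make_request(1, "tools/list"))
catalog = tool_catalog(LIST_TOOLS_RESPONSE)
print(sorted(catalog))  # ['list_buckets']

# 2. The model proposes a call; the client sanity-checks it, then sends tools/call.
proposed = {"name": "list_buckets", "arguments": {"project_id": "my-project"}}
print(missing_arguments(catalog[proposed["name"]], proposed["arguments"]))  # []
print(make_request(2, "tools/call", proposed))
```

The point of the design is that none of this code is specific to Cloud Storage: the same discovery-then-call loop works for any MCP server, because each one describes its own tools.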

This isn't just about making things a little simpler; it's a fundamental shift in how AI can interact with your cloud infrastructure. Your AI agents can now examine resources, generate SQL queries, execute them securely, and interpret the results, all without you having to hand-code every single integration point.

MCP servers are available now for a bunch of services, which is awesome. We're seeing them pop up for things like:

- AlloyDB for PostgreSQL: Your AI can get insights from and manage your AlloyDB clusters.

- BigQuery: Imagine an LLM that can generate queries based on your prompts, run them directly against your data warehouse, and interpret the results for you. That's the BigQuery MCP server, and it's GA!

- Bigtable: Your AI can analyze your Bigtable performance and metrics.

- Cloud Storage: Your AI can create buckets, read and write objects, and list bucket contents. Very handy for data processing workflows.

- Compute Engine: AI agents can now manage your instances, disks, and snapshots. Pretty wild, right?

- Spanner: Interact with your global-scale database from your AI apps.

- Pub/Sub: AI can publish and subscribe to messages, enabling real-time data flows.

- Managed Service for Apache Kafka: Same idea, but for your Kafka streams.

- Memorystore for Redis and Valkey: AI can interact with your in-memory data stores.

- Cloud SQL: Get performance metrics and manage your relational databases with AI.

The impact here is huge. It means you can build more sophisticated, more autonomous AI agents that can directly interact with and manage your GCP environment. This really reduces the friction between your AI models and your cloud resources.

For example, a data analyst could ask an LLM, "Show me the sales trend for product X in the last quarter," and if that LLM is connected to BigQuery via the MCP server, it could generate the SQL, execute it, and present the findings. No need for the analyst to even touch the BigQuery console. That's pretty powerful stuff.
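The analyst scenario can be sketched the same way. Below, the SQL is one plausible query an LLM might generate for that prompt (approximating "last quarter" as the last three months), and the request asks the server to run it via `tools/call`. The tool name `execute_sql`, its `projectId` and `query` arguments, and the `my-project.sales.orders` table with its columns are hypothetical placeholders; the BigQuery MCP server documentation lists the real tool names and parameters. The `result_text` helper reads the `content` list and `isError` flag that MCP tool results carry.

```python
import json


def build_sql_call(request_id: int, project_id: str, sql: str) -> dict:
    """Build an MCP tools/call request asking the server to run a query.

    Hypothetical: "execute_sql", "projectId" and "query" are placeholder
    names, not confirmed BigQuery MCP server identifiers.
    """
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {
            "name": "execute_sql",
            "arguments": {"projectId": project_id, "query": sql},
        },
    }


def result_text(response: dict) -> str:
    """Join the text items of an MCP tool result; raise if the tool reported an error."""
    result = response["result"]
    text = "\n".join(
        item["text"] for item in result.get("content", []) if item.get("type") == "text"
    )
    if result.get("isError"):
        raise RuntimeError(f"tool reported an error: {text}")
    return text


# SQL an LLM might generate for "sales trend for product X in the last quarter".
# Dataset, table, and column names are made up for illustration.
sql = """
SELECT DATE_TRUNC(order_date, WEEK) AS week, SUM(revenue) AS revenue
FROM `my-project.sales.orders`
WHERE product = 'X'
  AND order_date >= DATE_SUB(CURRENT_DATE(), INTERVAL 3 MONTH)
GROUP BY week
ORDER BY week
"""

request = build_sql_call(3, "my-project", sql)
print(json.dumps(request, indent=2))

# Stand-in for the server's reply, in the tool-result shape; no real data.
fake_response = {
    "jsonrpc": "2.0",
    "id": 3,
    "result": {"content": [{"type": "text", "text": "(query results as text)"}], "isError": False},
}
print(result_text(fake_response))
```

In a real agent, the host application would send `request` over the server's transport and hand the returned text back to the LLM, which then summarizes the trend for the analyst.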

Honestly, it feels like a big step towards a more intelligent and responsive cloud. It's all about letting your AI do more of the heavy lifting.

To get started, you'll want to check the specific documentation for the GCP service you're interested in, like the BigQuery MCP server documentation. This is definitely something to explore if you're looking to push the boundaries of what your AI applications can do on Google Cloud.

Happy building!